(This is the forty-fourth entry in The Modern Library Reading Challenge, an ambitious project to read the entire Modern Library from #100 to #1. Previous entry: The Age of Innocence.)

Very few people read Ford Madox Ford (né Ford Madox Hueffer) these days and there are salubrious and soul-preserving reasons why this is so. He’s the rare “great writer” who has as much relevance in the twenty-first century as some huckster trying to sell you an anti-garroting cravat. Parade’s End is ostensibly about the psychological effects of World War I, but, even on my second read of this plodding tetralogy, I found myself revisiting Ernst Jünger’s Storm of Steel, Richard Aldington’s Death of a Hero, Paul Fussell’s The Great War and Modern Memory, and even Rebecca West’s underrated The Return of the Soldier — all far more poignant and perspicacious volumes on how the Great War shaped human history. If anything, Parade’s End is an unwelcome reminder that the crusty white dudes who concocted the Modern Library canon were motivated more by what certain insiders deemed “great literature” than by bona fide literary standards.

Of course, I am merely one man on a literary journey — perhaps, more accurately, an asshole on the Internet with an opinion. Even so, when I posted a photo on my Instagram story of me wincing while holding up the Ford omnibus (complete with the unanticipated aesthetic touch of a bandage taped to my forehead, the result of a shaving accident rather than a sustained session in which I repeatedly banged my noggin against a stop sign pole, although my head often felt more like the latter while reading Parade’s End), a friend pointed out that she had indeed suffered with me when she had taken a stab at Ford. Her surprise revelation, delivered in a dramatic “Luke, I am your father” timbre, helped me to feel less alone. Yes, Ford Madox Ford had been dead for a good eighty-seven years and was thus an easy target for a cranky middle-aged bald dude like me who really wanted to write about Kerouac or Faulkner rather than this guy. But our bond of friendship became tighter, cemented in some shared reading agony that we had not hitherto known about. We shook our fists into the air and condemned the dreaded surname “Tietjens.”

You see, only the stodgiest tastemakers imaginable would have the stone-cold cruelty to recommend this dull and insufferable perorater to some starry-eyed young reader hoping to secure a foothold in the daunting Modern Library canon. It’s especially aggravating that I have to deal with Ford not once, but twice, on this infernal list. Yes, I am fated to suffer through The Good Soldier when we get to ML 30. I am not happy about this. But I did take a ridiculous literary oath fifteen years ago and I am a man of my word. That slimmer and more potent novel is admittedly a bit better than Parade’s End — “better” in the way that getting swiftly kicked in the balls is preferable to having a limb sawed off.

But as a graying though exuberant Gen Xer, I am duty-bound to tell any enthusiastic Zoomer or tap-dancing millennial that they would be better off watching Sam Levinson’s exploitative television series Euphoria than reading Ford Madox Ford. It can be sufficiently argued that the only reason that the 2012 BBC television adaptation of Parade’s End was memorable at all is because the brilliant Tom Stoppard (may he rest in peace) rearranged the events and added new scenes and dialogue — most tellingly taking on an “unfaithfully faithful” approach.

Who reads this man today? Perhaps a few superannuated Ford stans can be found whispering “Tietjens” as they slap their fading chits onto lonely numbers in moribund bingo hall parlors swarming with smoke. But I cannot in good conscience join this gloomy coterie of literary losers. These Ford boosters have so deluded themselves into blinkered advocacy that even Julian Barnes had the startling temerity to declare that Ford made Graham Greene, who rightly called out Last Post as “an afterthought,” look old-fashioned. Seriously bro?

Gentlemen don’t earn money. Gentlemen, as a matter of fact, don’t do anything. They exist. Perfuming the air like Madonna lilies. Money comes into them as air through petals and foliage. Thus the world is made better and brighter.

Compare this with Greene in The Quiet American and you see a significant difference between dowdy bloviating and taut observational precision:

A man open to bribes was to be relied upon below a certain figure, but sentiment might uncoil in the heart at a name, a photograph, even a smell remembered.

Christopher Tietjens — intended to reflect the dying gasps of an Edwardian age only now appreciated by husky buzzards pinching tobacco in glum dens boxed by dowdy leatherbound walls — is surely among the least interesting protagonists in 20th-century literature. He is the “last Tory” — a clumsy, blunt, physically gargantuan, and very rude statistician incapable of a jocular remark or a jolly jolt in his gait. Ford banged out pages and pages of leaden dialogue from this insufferable mansplainer. And his observations possess all the pleasure of a two-hundred-pound steel weight being thrown repeatedly into your solar plexus, presumably with some automated Peloton instructor shrieking into your eardrums with the unsettling tenor of an ICE agent preparing to murder someone in broad daylight. “You betray your non-Anglo-Saxon origin by being so vocal…And by your illuminative exaggerations!” “Little nippers like you don’t stop things….Besides, feel the wind!” The ellipses falsely suggest a free associative genius, but only succeeded in reducing me to unintended laughter. (How else was I supposed to soldier through this book? With a stern and self-immolating chin?) Ford actually presages many of these hokey lines with a breezy “Tietjens said:” in the paragraph before just so he can pad out his pages with the unpersuasive momentum of an undergrad trying to hit the 1,000-word minimum on the essay due the next morning.

Tietjens is often outlined as brilliant, but Ford’s descriptive approach is all tell and no show:

The fly that took them back went with the slow pomp of a procession over the winding marsh road in front of the absurdly picturesque red pyramid of the very old town.

Now even someone with a particularly acute case of ADHD will look to such a sentence and see that it is trying way too hard to sound “literary” when it doesn’t say much of anything at all. What are we supposed to dwell on here? The putter of the prewar Smith Flyer? Well, we don’t actually feel its spurts or its undulations in this sentence. But we do have the sense that the fly here is in “slow pomp,” or on display. Which doesn’t really conjure up a distinct image. Okay. Fine, Ford. You do you. But how does the “winding marsh road” contribute to the imagery? Does it serve in some juxtaposition to the fly? Of course not. The road image is used to get us to “an absurdly picturesque red pyramid” that stands in apparent counterpoint to “the very old town.” But what kind of red pyramid? How is it picturesque? Again, we don’t know. And it’s utterly maddening. Ford never describes this “pyramid” again. This isn’t poetic at all. It’s word salad. Ford delivers elegant variations that aren’t even interesting in the manner by which they fail — such as “an extraordinary Falstaff’s battalion of muddy odd-come shorts.” Ford stitches together random phrases, but he only succeeds in giving us disorienting incongruities that read more like some well-read guy tagging along with you on a drunken night in Vegas, though without the pleasure of slot machine clinks or a comely stranger you accidentally hook up with. (Hey, what happens in Vegas stays in Vegas!)

And there’s more hapless syntax where that came from, folks! Should I mention the shadow “falling across the bar of light that the sunlight threw in at his open door” or how a fellow named McKechnie “swallowed as men are said to swallow who suffer from hydrophobia”?

By contrast, here is P.G. Wodehouse describing a golf course at the beginning of “A Woman is Only a Woman”:

It is a vantage-point peculiarly fitted to the man of philosophic mind: for from it may be seen that varied, never-ending pageant, which men call Golf, in a number of its aspects. To your right, on the first tee, stand the cheery optimists who are about to make their opening drive, happily conscious that even a topped shot will trickle a measurable distance down the steep hill. Away in the valley, directly in front of you, is the lake hole, where these same optimists will be converted to pessimism by the wet splash of a new ball. At your side is the ninth green, with its sinuous undulations which have so often wrecked the returning traveler in sight of home. And at various points within your line of vision are the third tee, the sixth tee, and the sinister bunkers about the eighth green—none of them lacking in food for the reflective mind.

This is infinitely more enjoyable than Ford — and not just because Wodehouse is almost always a delight to read. Wodehouse maps out the territory and the types to be found in his setting and he somehow turns golfing — which I myself have never enjoyed outside of the brilliantly designed miniature courses that you find all throughout Ocean City, Maryland — into a joyfully absurd reverie for optimistic philosophy. Where Ford is muddled and confused, Wodehouse is clear and specific, complete with phrases like “wet splash of a new ball.” It is the difference between being stuck at a party with a bitter and rambling old man who gave up on finding felicity at least two decades earlier and a giddy eccentric who plans to cut the rug well into old age — or, at least, as long as his legs will two-step.

Ford Madox Ford was all influence and overhyped “talent.” It’s certainly no accident that the great Jean Rhys devoted her ferocious (and far more gifted) energies to savaging this bombastic tyrant in Quartet — revenge from Rhys after a protracted carnal entanglement. You see, there’s an undeniably skeezy quality to Ford both on the page and in his life. While serving as editor of The Transatlantic Review, Ford used his outsize literary influence to serve as a “literary mentor” to young writers (ahem, young women) decades younger than him. He would publish their early stories and exact a boudoir form of remuneration. This was a less enlightened time in which literary men in positions of power could not be held accountable. And these poor women had to endure Ford’s selfish clit-ignorant thrusts while lying back and thinking of publication. After Ford was rightfully spurned by Violet Hunt (she spent eight ghastly years with Ford; Ford, meanwhile, was cheating on his wife), Ford ruthlessly caricatured her not once, but twice — as Florence Dowell in The Good Soldier and, with ever more preposterous misogyny, as the philandering Sylvia Tietjens (Christopher’s wife) in Parade’s End. Sadly, this was what passed for éminence grise among writers in the early 20th century.

It’s safe to say that, like many clueless male authors who preceded him, Ford could not write women especially well. And this extends to Valentine Wannop, the young suffragette whom Tietjens falls for. At the beginning of A Man Could Stand Up–, the third book of Parade’s End, Ford not only has Valentine confused about how to use the telephone, but portrays her dreaming of doing nothing more than eating “pomegranates beside an infinite washtub of Reckitt’s blue.” Reckitt’s Blue, for those of you who aren’t familiar with 19th century household items, was a laundry whitener that helped brighten the hues of your clothes. Despite the fact that we are introduced to Valentine as a free-spirited suffragette in the first volume (unsurprisingly, her best moments in the tetralogy), she not only longs to perform domestic duties, but she complains that she is so alone and lives a “nunlike” existence (“And no one had ever wanted to marry her….No one even had ever tried to seduce her.”).

Now anybody even remotely familiar with the suffragettes knows that they were hardly reticent when it came to passion and sex, either with the men who dated them, with each other, or, quite frequently, as they learned to “control their passions” through the power of theoretical abstinence. (You’d honestly be better off hearing about the suffragettes from Diane Atkinson’s Rise Up, Women than from Ford Madox Ford the chronic mansplainer.) But in reading Parade’s End, we don’t get any real sense of the richer life that Ford was perhaps too incurious or incompetent to draw from. James Longenbach has observed that Ford “took pleasure in feeling more qualified to diagnose the problems with women than women themselves.” (And for anyone who wants to do a deep dive, Reconstructionary Tales, a blog run by Paul Skinner, has a great overview of Ford’s conflicted feelings about the suffragettes.)

My eyes winced with incredulity as Tietjens berated Valentine Wannop for being slightly inaccurate in quoting a passage. My soul plummeted into a protective crouch as I realized that Tietjens’s knowledge of the world was largely gleaned from memorizing passages in books with lifeless exactitude and even by reading the encyclopedia in alphabetical order. Almost every time a man showed up in a scene dominated by women, he would flap his lips and I felt very much like Fiona Apple trapped in a bar with the coked-out and garrulous egotist Quentin Tarantino.

And here’s the thing. Valentine sees very clearly what a fool Tietjens is:

She knew that his poor mind was empty of facts and of names; but her mother said she was of great help to her. Once provided with facts his mind worked out sound Tory conclusions — of quite startling and attractive theories — with extreme rapidity. This Mrs. Wannop found of the greatest use to her whenever — though it wasn’t now very often — she had an article to write for an excitable newspaper. She still, however, contributed to her failing organ of opinion, though it paid her nothing.

Since I’ve been lambasting the man at length, I’ll give Ford a few modest props for this more clear-eyed passage, but this still circles back to one of my primary gripes about Parade’s End. Obviously, Ford possessed enough cognizance to understand how “modern” women of that era were juggling love and career. But Ford, like many mediocre men before him, is largely incurious about the latter and vitiates what talent he possessed by saturating himself in the former. Hell, even Samuel Richardson’s Clarissa — a massive masterpiece that happily occupied weeks of my reading time last year — showed far more curiosity about the inner life of a young woman than Ford did in Parade’s End. And that’s also accounting for the 18th century’s comparatively more atavistic genuflection to the patriarchy.

Furthermore, activists are fiercely loyal to their causes, particularly if they protest (as Valentine does) on a daily basis. Near the end of Some Do Not…, there is a lengthy section in which Valentine agrees to become Tietjens’s “mistress” — although nothing is consummated — and we are subjected to what is arguably the most pathetic male fantasy on the Modern Library list: namely, Valentine’s fawning admiration for the “poor mind” of this hopeless dullard.

* * *

The first of the Parade’s End books was published two years after Ulysses and T.S. Eliot’s The Waste Land, a year before Virginia Woolf’s Mrs. Dalloway, and while Proust was five books into In Search of Lost Time. These modernist geniuses served up poetic passages about consciousness. They used abstract prose in thrilling and experimental ways to unpack the crags and nuances of human existence. Ford, by contrast, has merely given us clunky prose in which the reader is randomly deposited into a new time and location. Upon solving any “riddle” that Ford presents us, the answer itself proves to be disappointingly pedestrian. It really doesn’t help that Ford is fond of having his characters repeat the title of his novel. “No more parades” obviously means that the pomp and circumstance of established social routines derived from the old social order aren’t exactly going to be practiced after machine guns, mustard gas, and trench warfare demonstrated the truly monstrous possibilities of humankind. And the Groby Great Tree cut down at Tietjens’s estate is more a blunt and obvious metaphor of the old order collapsing than anything especially profound. Ford then commits the grave sin not only of believing himself to be much more clever than he really is, but of giving us a clunky journey in which we increasingly do not care about the destination.

Those who have praised Ford have done so on the basis of what I would deem “consciousness presented through continuous partial attention.” Yet they hold their tongues at the extremely contrived setups throughout the work. Mr. Duchemin, for example, goes insane without any specific reason. There is one particularly awful scene in Some Do Not… in which the “brilliant” Tietjens dukes it out with a banker over a series of overdraft charges.

Oh, but all the confusion and contradiction! The stuff of true literature! It’s why Parade’s End is on this list! Well, I see these as significant liabilities rather than virtues. I simply cannot believe that Parade’s End was carefully designed as an invaluable modernist contribution — in large part because Ford, at times, doesn’t seem to know Tietjens. We learn, for example, that Christopher has two brothers (one of whom appears on the page) in Some Do Not…. By the time we get to No More Parades, Christopher conveniently has a sister, who has previously been unmentioned. If this is the “inner life” meant to hammer home the conflict between the old and the new social orders, then I’d rather soak in a season of Bridgerton. But, hey, at least I’m now free of this dreadful opus. Don’t worry. I’m quite fond of the next few titles and I’ll behave myself slightly better in future installments!

Next Up! Dashiell Hammett’s The Maltese Falcon!

All historical reconsiderations have to start somewhere. And before C. Vann Woodward combed fastidiously through newspapers to change our perception of Jim Crow, he had to unseat a formidable (and wrongheaded) standard.

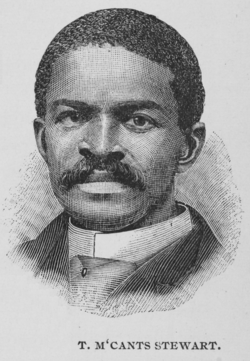

All historical reconsiderations have to start somewhere. And before C. Vann Woodward combed fastidiously through newspapers to change our perception of Jim Crow, he had to unseat a formidable (and wrongheaded) standard. Woodward observed that, as late as 1885, T. McCants Stewart, a Black lawyer and journalist and a close friend of Booker T. Washington, journeyed to his homestate of South Carolina to see how life was shaking out after not stepping foot there for ten years. (Stewart’s extraordinary reporting, published on

Woodward observed that, as late as 1885, T. McCants Stewart, a Black lawyer and journalist and a close friend of Booker T. Washington, journeyed to his homestate of South Carolina to see how life was shaking out after not stepping foot there for ten years. (Stewart’s extraordinary reporting, published on

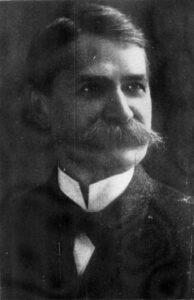

On January 25, 1898, the Charleston News and Courier published an item of devilish satire in response to a Jim Crow law then under consideration by the South Carolina Legislature. Much like people in 2024 never believing that ICE would become a massively budgeted paramilitary force randomly shooting American citizens and kidnapping taxpaying immigrants without due process, it then seemed unthinkable to call for railroad cars to be segregated by race. And so a shit-stirring editor by the name of

On January 25, 1898, the Charleston News and Courier published an item of devilish satire in response to a Jim Crow law then under consideration by the South Carolina Legislature. Much like people in 2024 never believing that ICE would become a massively budgeted paramilitary force randomly shooting American citizens and kidnapping taxpaying immigrants without due process, it then seemed unthinkable to call for railroad cars to be segregated by race. And so a shit-stirring editor by the name of  Unfortunately, the Charleston News and Courier was taken over by a different editor (

Unfortunately, the Charleston News and Courier was taken over by a different editor (

Sixty-three years after The Rise of the West‘s publication, it’s easy to take William McNeill for granted. You see, for many years, scholars widely accepted the worldview, one remarkably myopic in hindsight, that civilizations were not influenced by other civilizations. Perhaps this casual and subconscious strain of passive xenophobia was all the rage among upper-crust academics because, for several decades, these historians had walked the earth wearing too much tweed.

Sixty-three years after The Rise of the West‘s publication, it’s easy to take William McNeill for granted. You see, for many years, scholars widely accepted the worldview, one remarkably myopic in hindsight, that civilizations were not influenced by other civilizations. Perhaps this casual and subconscious strain of passive xenophobia was all the rage among upper-crust academics because, for several decades, these historians had walked the earth wearing too much tweed.

For the next two decades, historians stopped being social, fearing that vital scholarship would be curtailed by sudden violence until they were able to isolate tweed as the common factor. But time passed and the historians learned how to be gregarious while showing greater caution in the amount of tweed they wore on any given week. Thus, the troubling backwards thesis in which historians — egged on by Toynbee — believed that civilizations did not communicate and exchange ideas when caravans passed each other on a trade route remained.

For the next two decades, historians stopped being social, fearing that vital scholarship would be curtailed by sudden violence until they were able to isolate tweed as the common factor. But time passed and the historians learned how to be gregarious while showing greater caution in the amount of tweed they wore on any given week. Thus, the troubling backwards thesis in which historians — egged on by Toynbee — believed that civilizations did not communicate and exchange ideas when caravans passed each other on a trade route remained.